Shivam Shandilya

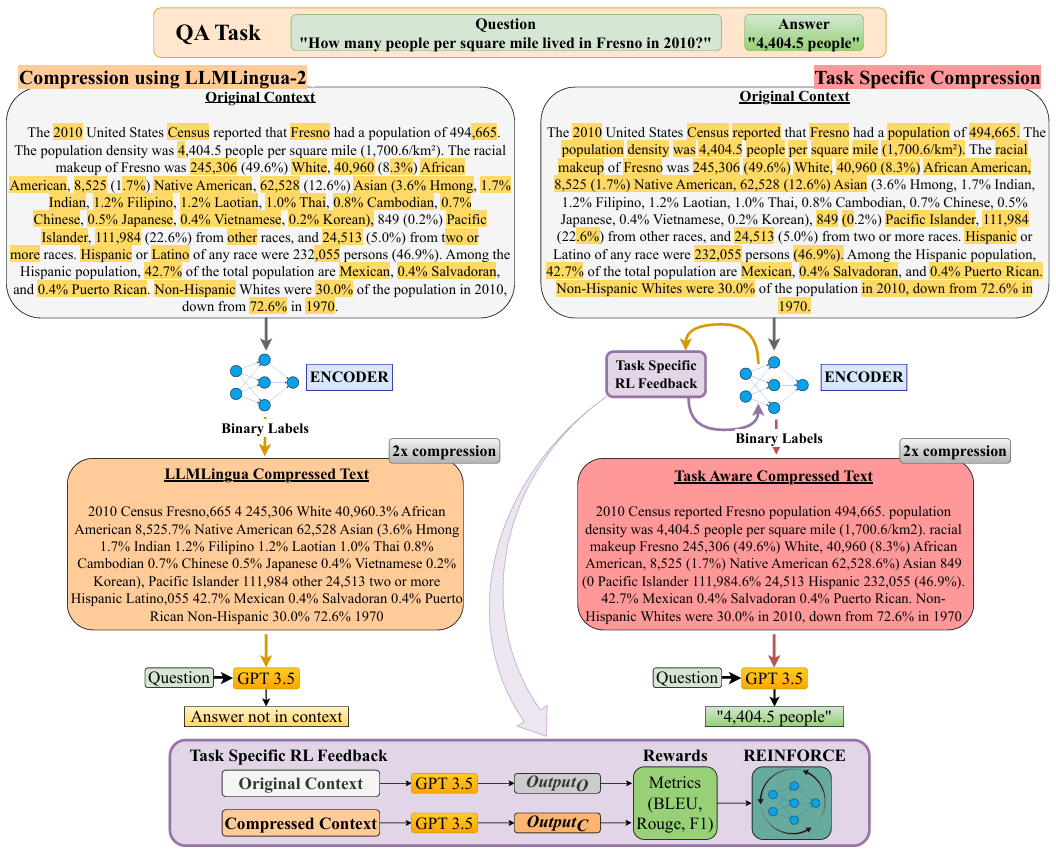

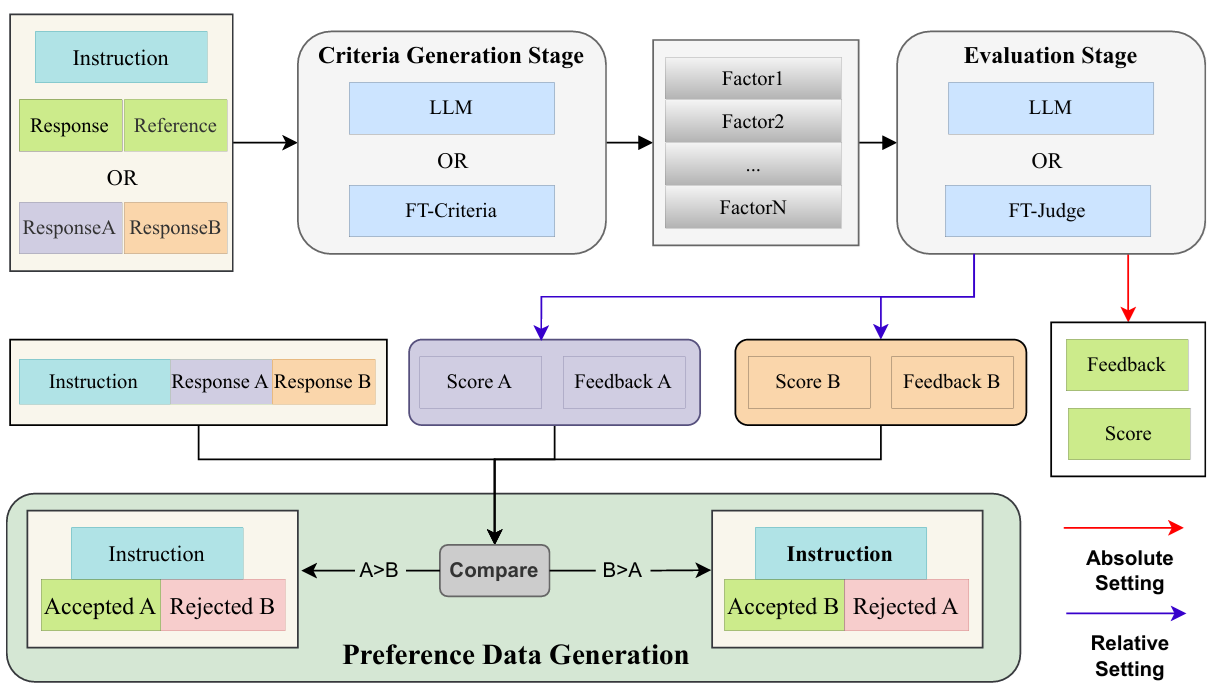

Hi. I am currently working as a Research Fellow at Microsoft Research Lab (MSRI) in Bangalore, India. My research focuses on developing efficient models and frameworks for large language models (LLMs). I have worked on projects such as Task-Aware Prompt Compression and the Self-Assessing LLM framework, aiming to enhance efficiency and performance in various NLP tasks.

Prior to my current role, I completed my B.Tech in Electrical and Electronics Engineering at Birla Institute of Technology, Mesra. During my undergraduate studies, I contributed to the PyZombis project under the Python Software Foundation as part of Google Summer of Code 2022. I also spent a summer as a research intern at CoEAMT, IIT Kharagpur.

My research interests broadly lie at the intersection of NLP and efficiency, and I am passionate about exploring ways to make language technologies more accessible and effective.